Imagine you are a property developer in a booming digital city. You have two ways to house your residents (your applications). You could build a massive, sturdy apartment complex where every tenant has their own heavy-duty front door, plumbing, and electrical circuit—rock-solid but expensive and slow to renovate. Or, you could provide a fleet of sleek, modular “tiny homes” that share the city’s core utilities but can be moved across the country in a single afternoon.

If you’ve spent any time in IT operations or DevOps over the last decade, you’ve likely heard the debate countless times: virtual machines vs containers. It often starts as a technical comparison and quickly turns into a philosophical one.

For decades, we relied on the “heavy-duty apartment” of Virtual Machines (VMs) to keep our servers from becoming a tangled mess of conflicting software. But as the clock ticks through 2026, the landscape has shifted. Between the seismic licensing changes following Broadcom’s acquisition of VMware and the relentless march of cloud-native development, understanding the nuance between these two technologies isn’t just for system admins anymore—it’s a survival skill for any tech-forward organization.

Today, virtualization (VMware) and containerization (Docker) are no longer competing ideas. They are foundational building blocks that often work best together. Understanding where each shines, and where it struggles, is essential for designing resilient, scalable infrastructure.

The Bedrock: Understanding Virtualization through VMware

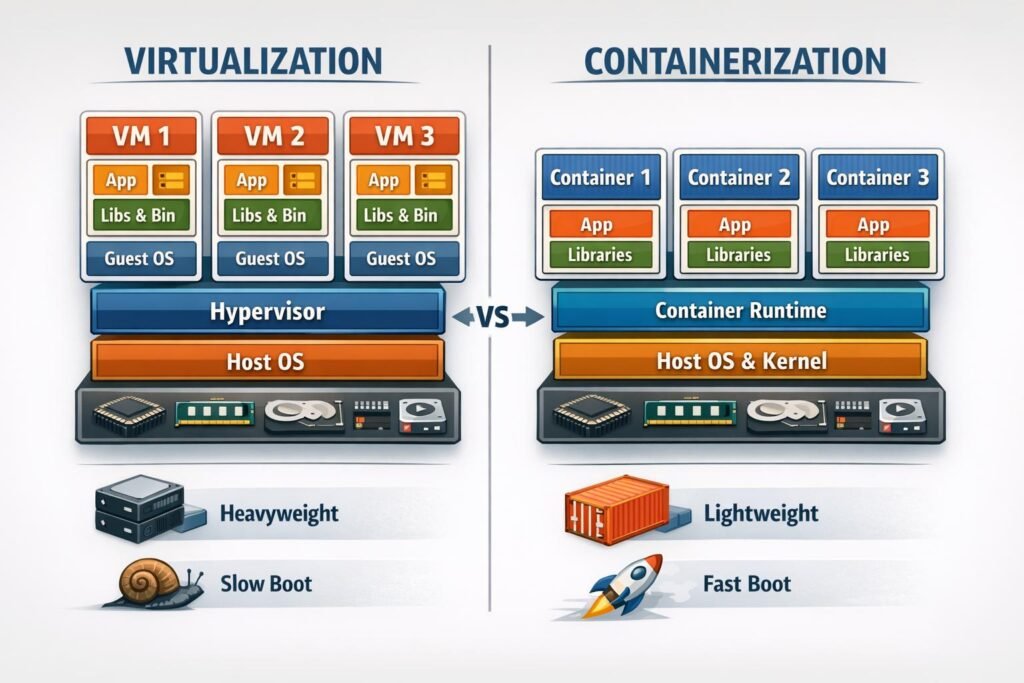

To appreciate where we are going, we have to look at the giant that paved the way. Virtualization is the process of using software to create an abstraction layer over computer hardware. This allows a single physical server to be sliced into multiple “Virtual Machines.”

The Role of the Hypervisor

At the heart of the VMware ecosystem is the Hypervisor (specifically ESXi). Think of the hypervisor as a digital referee. It sits directly on the hardware and doles out CPU, RAM, and storage to various Guest Operating Systems. Each VM is a “full-fat” environment; it thinks it’s a real computer, complete with its own copy of Windows or Linux.

Why VMware Still Holds the Throne (For Now)

Even in 2026, VMware remains the gold standard for legacy stability. If you are running a massive, monolithic database or a highly sensitive financial application, the hardware-level isolation of a VM provides a “blast radius” protection that is hard to beat. If one VM crashes or gets compromised, the hypervisor ensures the neighbor is none the wiser.

However, the “Broadcom Effect” has changed the conversation. Following the 2023 acquisition, many enterprises have faced significant shifts in licensing models, moving from perpetual licenses to subscription-heavy bundles. This has forced a widespread re-evaluation: Do we really need a full VM for every small task?

The Agile Revolution: Containerization with Docker

If VMware is the digital landlord, Docker is the logistics expert. Containerization doesn’t try to simulate a whole computer. Instead, it packages just the application and its dependencies—libraries, configuration files, and runtime—into a single, lightweight “image.”

The Shared Kernel Magic

Unlike a VM, a container does not carry its own Operating System (OS). It shares the host’s OS kernel. This might sound like a small detail, but the implications are massive:

- Speed: A VM can take a minute or more to boot because it has to load an entire OS. A Docker container typically starts in about a second or less, since it only launches a process on a kernel that is already running.

- Efficiency: You can fit hundreds of containers on a server that might only handle a dozen VMs.

- Portability: Docker took most of the sting out of the “it works on my machine” excuse. Because the container carries its userland environment with it, it behaves the same on a developer’s laptop as it does in a high-scale production cloud, provided the host kernel and CPU architecture are compatible.
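The efficiency point comes down to simple arithmetic: every VM pays for a guest OS, while a container pays only a small runtime overhead. The sketch below makes that concrete. All figures (host size, application footprint, per-instance overhead) are illustrative assumptions rather than measurements, and `instances_per_host` is a hypothetical helper.

```python
def instances_per_host(host_ram_mb: int, app_ram_mb: int, overhead_mb: int) -> int:
    """Return how many instances fit when each needs app RAM plus a fixed overhead."""
    return host_ram_mb // (app_ram_mb + overhead_mb)


# Illustrative assumptions, not benchmarks:
HOST_RAM_MB = 64 * 1024        # a 64 GB server
APP_RAM_MB = 128               # memory the application itself needs
VM_OVERHEAD_MB = 4096          # guest OS carried by every VM (assumed)
CONTAINER_OVERHEAD_MB = 32     # per-container runtime overhead (assumed)

vms = instances_per_host(HOST_RAM_MB, APP_RAM_MB, VM_OVERHEAD_MB)
containers = instances_per_host(HOST_RAM_MB, APP_RAM_MB, CONTAINER_OVERHEAD_MB)

print(f"VMs: {vms}, containers: {containers}")  # VMs: 15, containers: 409
```

The exact ratio depends entirely on the assumed overheads, but the shape of the result holds whenever the guest OS is large relative to the application.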

The Docker Ecosystem in 2026

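By now, Docker has evolved from a simple tool into a massive platform. While competitors like Podman have gained ground, Docker remains the default starting point for modern “DevOps” workflows. The images it builds follow the OCI standard, so they run unchanged on Kubernetes (the orchestrator that manages these containers at scale), even though Kubernetes itself now typically runs them through runtimes such as containerd or CRI-O rather than Docker Engine.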

Comparison: Virtualization vs. Containerization

To help you decide which tool fits your project, here is a side-by-side summary.

| Feature | Virtualization (VMware) | Containerization (Docker) |
| --- | --- | --- |
| Isolation | High (Hardware-level) | Medium (Process-level) |
| Guest OS | Full OS per VM | Shares Host OS kernel |
| Startup Time | Tens of seconds to minutes | Typically a second or less |
| Resource Usage | High (GBs of RAM/Storage) | Low (MBs of RAM/Storage) |
| Portability | Limited by Hypervisor/OS | High (Cross-cloud/local) |
| Best For | Monolithic apps, strict security | Microservices, rapid scaling |

When to Use VMware, Docker, or Both

Choose VMware When:

- Regulatory compliance is strict

- Applications require full OS control

- Operational stability outweighs deployment speed

Choose Docker When:

- Development velocity is a priority

- You’re building microservices or APIs

- CI/CD automation is central to delivery

Choose Both When:

- Running containers at enterprise scale

- Operating in hybrid or multi‑cloud setups

- Balancing agility with governance

Most modern architectures fall into this third category, reflecting how virtualization and containerization increasingly complement rather than compete.
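The three checklists above can be read as a small decision rule. The following is a minimal sketch of that rule, not a definitive sizing tool. The `Workload` fields and the `recommend` function are hypothetical names that simply mirror the bullet points.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    # Signals from the "Choose VMware When" list
    strict_compliance: bool = False
    needs_full_os_control: bool = False
    stability_over_speed: bool = False
    # Signals from the "Choose Docker When" list
    fast_iteration: bool = False
    microservices_or_apis: bool = False
    cicd_central: bool = False


def recommend(w: Workload) -> str:
    """Map the checklist signals to 'vm', 'container', 'both', or 'undecided'."""
    vm_signals = any([w.strict_compliance, w.needs_full_os_control, w.stability_over_speed])
    container_signals = any([w.fast_iteration, w.microservices_or_apis, w.cicd_central])
    if vm_signals and container_signals:
        return "both"  # agility and governance are both required
    if vm_signals:
        return "vm"
    if container_signals:
        return "container"
    return "undecided"


print(recommend(Workload(strict_compliance=True, microservices_or_apis=True)))  # both
```

A real assessment would weigh these signals rather than treat them as booleans, but even this crude version shows why so many workloads land on "both".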

Deep Insight: The “Noisy Neighbor” and the Security Gap

One of the most frequent debates around virtualization and containerization is the trade-off between performance and security.

In a VMware environment, the isolation is “hard.” Because there is a hypervisor between the VM and the hardware, it is extremely difficult for an attacker to “escape” one VM and infect another. This makes it the preferred choice for multi-tenant environments where you don’t trust your neighbors.

In Docker, because everyone shares the same “brain” (the kernel), the risk is slightly higher. If a vulnerability exists in the Linux kernel itself, a compromised container could potentially gain access to the host. However, in 2026, sandboxing technologies have blurred these lines: gVisor intercepts system calls in a user-space kernel, and Kata Containers runs each workload inside a lightweight VM, offering “VM-like” isolation for containers without the overhead of a full traditional VM.
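The shared kernel is easy to observe for yourself. The short script below prints the kernel release the current process sees, using only Python's standard `platform` module. Run it on a Linux host and again inside a standard Docker container on that host, and both should report the same release, whereas a VM on the same hardware reports whatever kernel its guest OS booted. (With Docker Desktop on macOS or Windows, containers run inside a helper Linux VM, so the release shown is that VM's kernel.) The function name `kernel_release` is just an illustrative choice.

```python
import platform


def kernel_release() -> str:
    """Return the release string of the kernel this process is running on."""
    return platform.uname().release


if __name__ == "__main__":
    # Inside a standard container this matches the host's kernel,
    # because the container has no kernel of its own.
    print(kernel_release())
```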

Key Insights Most Articles Miss

1. Containers Didn’t Kill Virtual Machines

Despite headlines, containers did not replace virtualization. In practice, many enterprises run their containers inside virtual machines (the worker nodes of most public-cloud Kubernetes services are themselves VMs), combining Docker’s agility with the isolation of a hypervisor.

This hybrid approach simplifies security, multi‑tenancy, and cloud portability.

2. Performance Isn’t the Only Metric

Yes, containers often outperform VMs in startup time and density. For steady-state throughput, however, tuning and workload type often matter more than the technology choice itself.

3. Operational Maturity Matters

VMware environments benefit from decades of operational tooling. Containers demand cultural shifts—monitoring, security, and troubleshooting require new habits and platforms like Kubernetes.

Teams that struggle with containers usually face process gaps, not technical limitations.

The 2026 Hybrid Reality: Why Choose One?

The biggest “fresh perspective” we can offer is this: the war between virtualization and containerization is over, and the winner is hybridization.

Modern infrastructure isn’t an “either/or” proposition. Alongside the familiar pattern of running containers on top of VMs, there is growing interest in KubeVirt and OpenShift Virtualization, where organizations run their old-school VMs inside a container orchestration platform.

The Evolution of the “Stack”

- The Infrastructure Layer: Bare-metal servers or public cloud (AWS/Azure).

- The Virtualization Layer: VMware or Proxmox provides the stable base.

- The Container Layer: Docker/Kubernetes runs on top of those VMs.

This “nested” approach gives you the best of both worlds. You get the iron-clad security and management tools of VMware with the developer-friendly agility of Docker. According to recent industry reports, over 90% of global organizations now run containerized applications in production, but nearly all of them still maintain a significant VM footprint for their core databases.

Personal Insight: The Human Cost of Migration

Through our experience in the field, we’ve noticed that the biggest hurdle isn’t the code—it’s the culture. Moving from VMware to Docker requires a fundamental shift in how teams think about “state.”

VMs are often treated like Pets: we give them names, we patch them, we care for them when they are sick. Containers are Cattle: if one misbehaves, we kill it and start a new one instantly. If your team is still “massaging” servers and manually logging in to fix configs, no amount of Dockerizing will save you. You must embrace the philosophy of Infrastructure as Code.
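To make the “cattle” mindset concrete, here is a minimal sketch of the reconcile idea behind Infrastructure as Code: you declare the state you want, and a loop replaces anything that deviates instead of repairing it by hand. Everything here is hypothetical and simplified, including the `Instance` class, the `reconcile` function, and the `api:1.0` image name. Real orchestrators such as Kubernetes do this with far more care.

```python
from dataclasses import dataclass, field
from itertools import count

_ids = count(1)  # simple source of unique instance ids


@dataclass
class Instance:
    image: str
    healthy: bool = True
    id: int = field(default_factory=lambda: next(_ids))


def reconcile(instances: list[Instance], image: str, replicas: int) -> list[Instance]:
    """Return a fleet that matches the declared state, replacing rather than repairing."""
    # Cattle, not pets: discard anything unhealthy or running a stale image.
    kept = [i for i in instances if i.healthy and i.image == image]
    kept = kept[:replicas]  # scale down if there are too many
    # Start fresh instances until the declared replica count is met.
    while len(kept) < replicas:
        kept.append(Instance(image=image))
    return kept


fleet = [Instance("api:1.0"), Instance("api:1.0", healthy=False), Instance("api:0.9")]
fleet = reconcile(fleet, image="api:1.0", replicas=3)
print([(i.id, i.image, i.healthy) for i in fleet])
```

Nobody logs in to nurse the unhealthy instance or patch the outdated one. Both are dropped and replaced, and the declared state (`image`, `replicas`) is the only thing a human edits.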

Conclusion: Future-Proofing Your Strategy

How you balance virtualization and containerization depends on your goals for 2026 and beyond.

- Choose VMware if you need robust isolation, are managing legacy “heavy” applications, or require the sophisticated management features that Broadcom’s ecosystem provides for massive enterprise fleets.

- Choose Docker if you are building new applications, need to scale rapidly in the cloud, or want to empower your developers to move from “code to production” in minutes rather than days.

The most successful architects we see today aren’t “VM People” or “Docker People.” They are Solution People who recognize that a VM is a great place for a database to live, and a container is the perfect vehicle for the API that talks to it.
